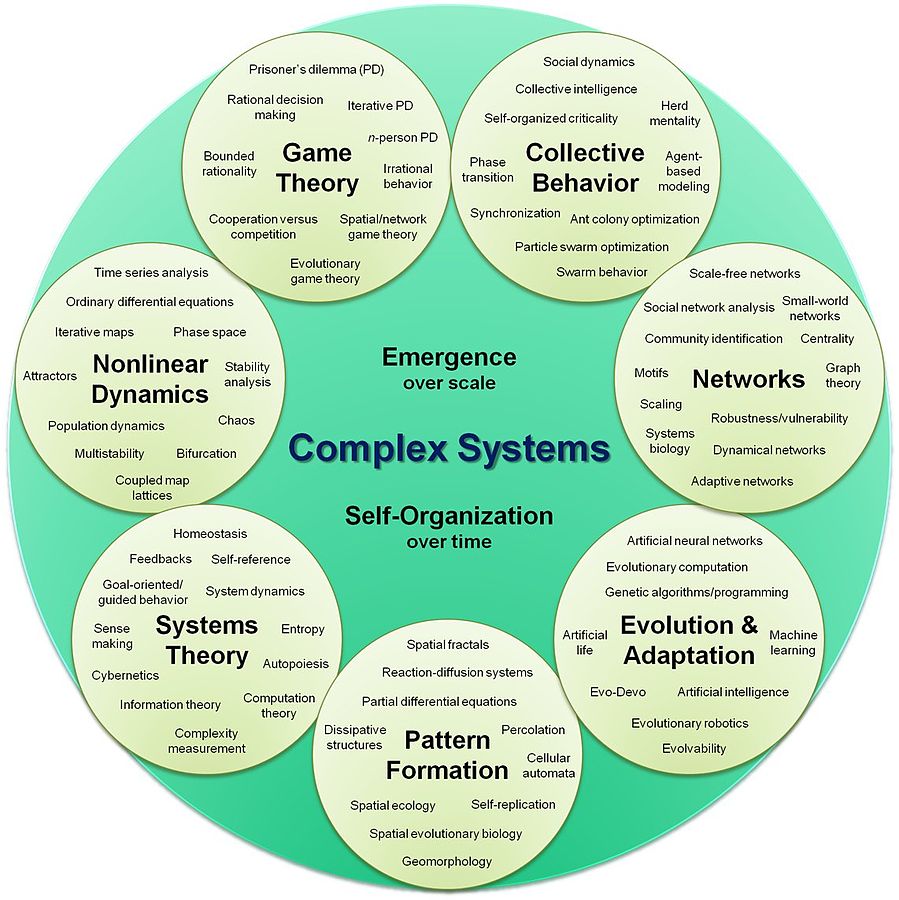

Artificial intelligence (AI) is intelligence exhibited by machines, rather than by humans or other animals.

In the open letter, the specialists warn the review conference of the Convention on Conventional Weapons that this arms race threatens to usher in the “third revolution in warfare” after gunpowder and nuclear arms. The founders wrote: “Once developed, lethal autonomous weapons will permit armed conflict to be fought at a scale greater than ever, and at timescales faster than humans can comprehend. These can be weapons of terror, weapons that despots and terrorists use against innocent populations, and weapons hacked to behave in undesirable ways... We do not have long to act. Once this Pandora’s box is opened, it will be hard to close.”

AI experts have previously warned that AI technology has reached a point where the deployment of autonomous weapons is feasible within years, rather than decades. While AI can be used to make the battlefield a safer place for military personnel, experts fear that offensive weapons that operate on their own would lower the threshold for going to battle and result in greater loss of human life.

The letter, launched at the opening of the International Joint Conference on Artificial Intelligence (IJCAI) in Melbourne, Australia, on Monday, August 21, 2017, has the backing of high-profile figures in the robotics field and strongly stresses the need for urgent action, after the UN was forced to delay a meeting that was due to start that Monday to review the issue. The founders call for “morally wrong” lethal autonomous weapons systems to be added to the list of weapons banned under the UN’s Convention on Certain Conventional Weapons, brought into force in 1983, which includes chemical and intentionally blinding laser weapons.

An Open Letter Launched in 2015

This is not the first time the IJCAI, one of the world’s leading AI conferences, has been used as a platform to discuss lethal autonomous weapons systems.
In 2015, the conference was used to launch an open letter, signed by thousands of AI and robotics researchers including Elon Musk and Stephen Hawking, that similarly called for a ban and helped push the UN into formal talks on the technologies. This open letter was announced at the opening of the IJCAI 2015 conference on July 28, 2015:

"Autonomous weapons select and engage targets without human intervention. They might include, for example, armed quadcopters that can search for and eliminate people meeting certain pre-defined criteria, but do not include cruise missiles or remotely piloted drones for which humans make all targeting decisions. Artificial Intelligence (AI) technology has reached a point where the deployment of such systems is — practically if not legally — feasible within years, not decades, and the stakes are high: autonomous weapons have been described as the third revolution in warfare, after gunpowder and nuclear arms.

Many arguments have been made for and against autonomous weapons, for example that replacing human soldiers by machines is good by reducing casualties for the owner but bad by thereby lowering the threshold for going to battle. The key question for humanity today is whether to start a global AI arms race or to prevent it from starting. If any major military power pushes ahead with AI weapon development, a global arms race is virtually inevitable, and the endpoint of this technological trajectory is obvious: autonomous weapons will become the Kalashnikovs of tomorrow. Unlike nuclear weapons, they require no costly or hard-to-obtain raw materials, so they will become ubiquitous and cheap for all significant military powers to mass-produce. It will only be a matter of time until they appear on the black market and in the hands of terrorists, dictators wishing to better control their populace, warlords wishing to perpetrate ethnic cleansing, etc.
Autonomous weapons are ideal for tasks such as assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group. We therefore believe that a military AI arms race would not be beneficial for humanity. There are many ways in which AI can make battlefields safer for humans, especially civilians, without creating new tools for killing people.

Just as most chemists and biologists have no interest in building chemical or biological weapons, most AI researchers have no interest in building AI weapons — and do not want others to tarnish their field by doing so, potentially creating a major public backlash against AI that curtails its future societal benefits. Indeed, chemists and biologists have broadly supported international agreements that have successfully prohibited chemical and biological weapons, just as most physicists supported the treaties banning space-based nuclear weapons and blinding laser weapons.

In summary, we believe that AI has great potential to benefit humanity in many ways, and that the goal of the field should be to do so. Starting a military AI arms race is a bad idea, and should be prevented by a ban on offensive autonomous weapons beyond meaningful human control."

References

Future of Life Institute. (2017). Open Letter on Autonomous Weapons - Future of Life Institute. [online] Available at: https://futureoflife.org/open-letter-autonomous-weapons [Accessed 29 Aug. 2017].

Gibbs, S. (2017). Elon Musk leads 116 experts calling for outright ban of killer robots. [online] the Guardian. Available at: https://www.theguardian.com/technology/2017/aug/20/elon-musk-killer-robots-experts-outright-ban-lethal-autonomous-weapons-war [Accessed 29 Aug. 2017].


